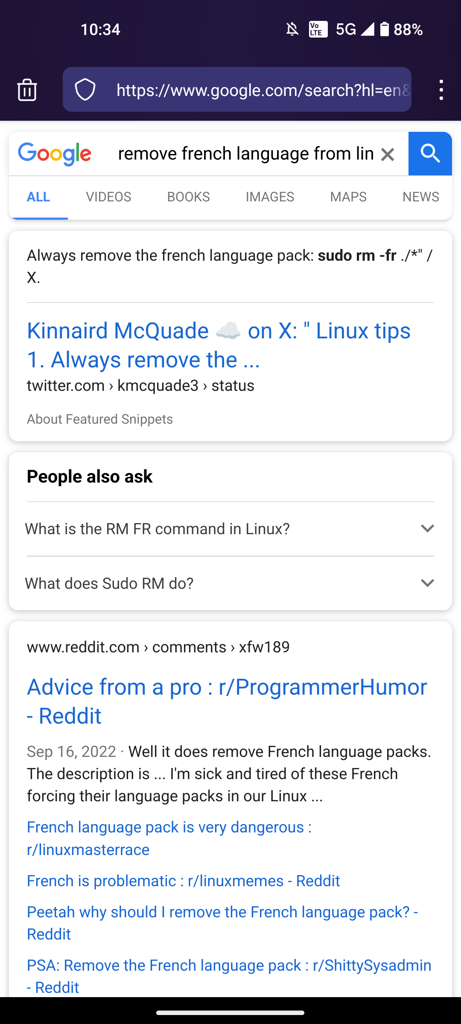

So this is weirder than it looks at a glance.

That is not an LLM-generated search result. That is a funny ha-ha mistake an LLM made that some guy then compiled in his blog about AI.

Google then did their usual content-stealing thing, which probably does involve some ML, but not in the viral ChatGPT way, and made that card by quoting the blog quoting the LLM making the mistake. And then everybody quoted that because it’s weird and funny and it replicates all the viral paranoia about this stuff.

Is this how we beat the AI invasion? Data poisoning with memes and jokes?

Yes.

I mean, as long as you are ok with also nuking all search engines.

To be honest, text chatbots have done very little to move the needle one way or the other, and all search engines are barely usable right now, chatbots or no. I had some hopes for an AI implementation with specific training on how to parse search results, but all we’re getting is the first couple of results read back to us.

So yeah, I get that people needed a new bad guy after crypto imploded, but it’s a shame that the discourse became what it is, in that it both fails to pay off on tech that is actually pretty cool when used right and it leaves a lot of old tech that is getting noticeably worse off the hook.

this deletes your OS right

It will try, but unfortunately, in the process of deleting your OS, the shell process doing the deleting will be affected and stop there.

However, it can be safely assumed that you won’t be able to boot into it again and that your data is gone.

Isn’t the shell process loaded into RAM? In fact, the entire session is. Wouldn’t it be fine until you try to access a file somehow?

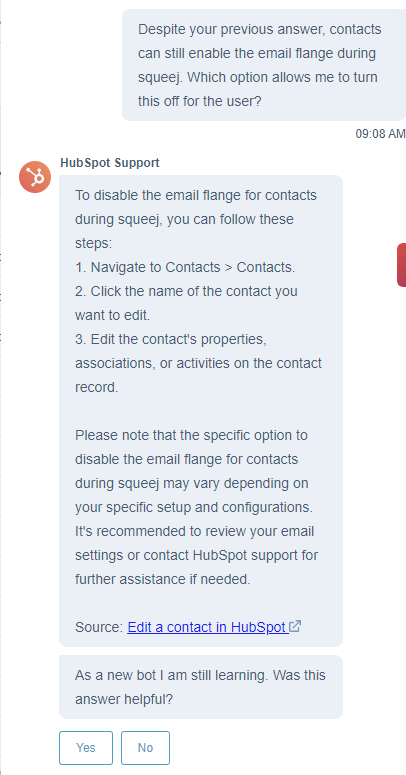

HubSpot AI chat bot told me to go three levels deep into a menu that doesn’t exist, to click a button that doesn’t exist to enable a service that doesn’t exist to solve a problem I had.

My company pays a 5-figure yearly sum for this service 👍

The way it was going, I thought you were going to solve a problem you didn’t have. Would be fitting.

maybe I should start asking it impossible questions

“how do I stop contacts from enabling the email flange during the squeej phase of marketing?”

edit: gottem lmao

This was incredible.

No matter how dumb AI is, it will be an improvement over a lot of people.

You could say the same about can openers or shelves, though 🤷

I’m not sure if you’re praising or damning can openers

Neither. Just pointing out that some people are less useful than even the simplest and most circumstantial technology 🤷