Thank fucking god.

I got sick of the overhyped tech bros pumping AI into everything with no understanding of it…

But then I got way more sick of everyone else thinking they’re clowning on AI when in reality they’re just demonstrating an equally sized misunderstanding of the technology in a snarky pessimistic format.

I’m more annoyed that Nvidia is looked at like some sort of brilliant strategist. It’s a GPU company that was lucky enough to be around when two new massive industries found an alternative use for graphics hardware.

They happened to be making pick axes in California right before some prospectors found gold.

And they don’t even really make pick axes, TSMC does. They just design them.

They just design them.

It’s not trivial though. They also managed to lock dev with CUDA.

That being said I don’t think they were “just” lucky, I think they built their luck through practices the DoJ is currently investigating for potential abuse of monopoly.

Yeah, CUDA made a lot of this possible.

Once crypto mining was too hard, Nvidia needed a market beyond image modeling and college machine learning experiments.

They didn’t just “happen to be around”. They created the entire ecosystem around machine learning while AMD just twiddled their thumbs. There is a reason why no one is buying AMD cards to run AI workloads.

One of the reasons being Nvidia forcing unethical vendor lock in through their licensing.

I feel like for a long time, CUDA was a laser looking for a problem.

It’s just that the current (AI) problem might solve expensive employment issues.

It’s just that C-Suite/managers are pointing that laser at the creatives instead of the jobs whose task it is to accumulate easily digestible facts and produce a set of instructions. You know, like C-Suites and middle/upper managers do.

And NVidia have pushed CUDA so hard. AMD have ROCm, an open source CUDA equivalent for AMD.

But it’s kinda like Linux Vs windows. NVidia CUDA is just so damn prevalent.

I guess it was first. CUDA has wider compatibility with Nvidia cards than ROCm with AMD cards.

The only way AMD can win is to show a performance boost for a power reduction and cheaper hardware. So many people are entrenched in NVidia, the cost of switching to ROCm/AMD is a huge gamble.

Imo we should give credit where credit is due and I agree, not a genius, still my pick is a 4080 for a new gaming computer.

Go ahead and design a better pickaxe than them, we’ll wait…

Go ahead and design a better pickaxe than them, we’ll wait…

Same argument:

“He didn’t earn his wealth. He just won the lottery.”

“If it’s so easy, YOU go ahead and win the lottery then.”

My fucking god.

“Buying a lottery ticket, and designing the best GPUs, totally the same thing, amiriteguys?”

In the sense that it’s a matter of being in the right place at the right time, yes. Exactly the same thing. Opportunities aren’t equal - they disproportionately affect those who happen to be positioned to take advantage of them. If I’m giving away a free car right now to whoever comes by, and you’re not nearby, you’re shit out of luck. If AI didn’t HAPPEN to use massively multi-threaded computing, Nvidia would still be artificial scarcity-ing themselves to price gouging CoD players. The fact you don’t see it for whatever reason doesn’t make it wrong. NOBODY at Nvidia was there 5 years ago saying “Man, when this new technology hits we’re going to be rolling in it.” They stumbled into it by luck. They don’t get credit for foreseeing some future use case. They got lucky. That luck got them first mover advantage. Intel had that too. Look how well it’s doing for them. Nvidia’s position over AMD in this space can be due to any number of factors… production capacity, driver flexibility, faster functioning on a particular vector operation, power efficiency… hell, even the relationship between the CEO of THEIR company and OpenAI. Maybe they just had their salespeople call first. Their market dominance likely has absolutely NOTHING to do with their GPUs having better graphics performance, and to the extent they are, it’s by chance - they did NOT predict generative AI, and their graphics cards just HAPPEN to be better situated for SOME reason.

they did NOT predict generative AI, and their graphics cards just HAPPEN to be better situated for SOME reason.

This is the part that’s flawed. They have actively targeted neural network applications with hardware and driver support since 2012.

Yes, they got lucky in that generative AI turned out to be massively popular, and required massively parallel computing capabilities, but luck is one part opportunity and one part preparedness. The reason they were able to capitalize is because they had the best graphics cards on the market and then specifically targeted AI applications.

His engineers built it, he didn’t do anything there

As I job-hunt, every job listed over the past year has been “AI-driven [something]” and I’m really hoping that trend subsides.

“This is a mid-level position requiring at least 7 years experience developing LLMs.” -Every software engineer job out there.

That was cloud 7 years ago and blockchain 4

Reminds me of when I read about a programmer getting turned down for a job because they didn’t have 5 years of experience with a language that they themselves had created 1 to 2 years prior.

Yeah, I’m a data engineer and I get that there’s a lot of potential in analytics with AI, but you don’t need to hire a data engineer with LLM experience for aggregating payroll data.

there’s a lot of potential in analytics with AI

I’d argue there is a lot of potential in any domain with basic numeracy. In pretty much any business or institution somebody with a spreadsheet might help a lot. That doesn’t necessarily require any Big Data or AI though.

The tech bros had to find an excuse to use all the GPUs they got for crypto after they bled that dry

If that’s the reason, I wouldn’t even be mad, that’s recycling right there.

The tech bros had to find an excuse to use all the GPUs they got for crypto after they ~~bled that dry~~ upgraded to proof-of-stake.

I don’t see a similar upgrade for “AI”.

And I’m not a fan of BTC but $50,000+ doesn’t seem very dry to me.

No, it’s when people realized it’s a scam

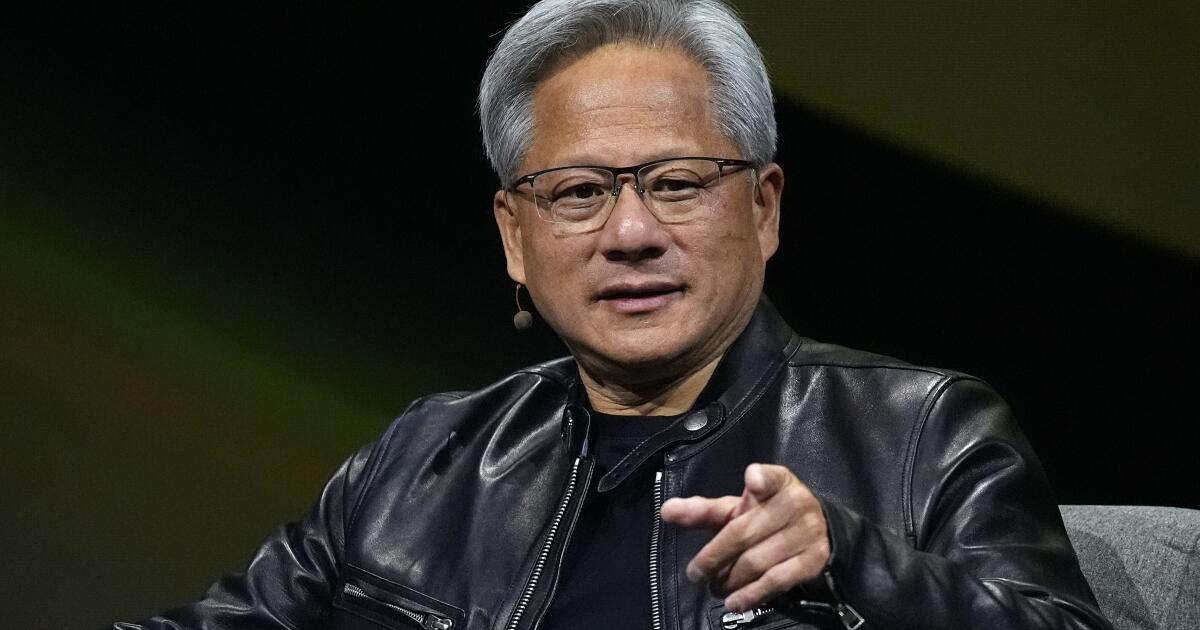

Shed a tear, if you wish, for Nvidia founder and Chief Executive Jensen Huang, whose fortune (on paper) fell by almost $10 billion that day.

Thanks, but I think I’ll pass.

I’m sure he won’t mind. Worrying about that doesn’t sound like working.

I work from the moment I wake up to the moment I go to bed. I work seven days a week. When I’m not working, I’m thinking about working, and when I’m working, I’m working. I sit through movies, but I don’t remember them because I’m thinking about work.

- Huang on his 14 hour workdays

It is one way to live.

That sounds like mental illness.

ETA: Replace “work” in that quote with practically any other activity/subject, whether outlandish or banal.

I sit through movies but I don’t remember them because I’m thinking about baking cakes.

I sit through movies but I don’t remember them because I’m thinking about traffic patterns.

I sit through movies but I don’t remember them because I’m thinking about cannibalism.

I sit through movies but I don’t remember them because I’m thinking about shitposting.

Obsessed with something? At best, you’re “quirky” (depending on what you’re obsessed with). Unless it’s money. Being obsessed with that is somehow virtuous.

Valid argument for sure

It would be sad if therapists kept telling him that but he could never remember

“Sorry doc, was thinking about work. Did you say something about line go up?”

I don’t think you become the best tech CEO in the world by having a healthy approach to work. He is just wired differently, some people are just all about work.

Some would not call that living

Yeah ok sure buddy, but what do you DO actually?

Psychosis doesn’t justify extreme privilege.

People like that consider scrolling xitter work

He knows what this hype is, so I don’t think he’d be upset. Still filthy rich when the bubble bursts, and that won’t be soon.

My only real hope out of this is that that copilot button on keyboards becomes the 486 turbo button of our time.

Meaning you unpress it, and computer gets 2x faster?

Actually you pressed it and everything got 2x slower. Turbo was a stupid label for it.

I could be misremembering but I seem to recall the digits on the front of my 486 case changing from 25 to 33 when I pressed the button. That was the only difference I noticed though. Was the beige bastard lying to me?

Lying through its teeth.

There was a bunch of DOS software that ran too fast to be usable on later processors, like a roguelike game where you fly across the map too fast to control. The Turbo button would bring it down to 8086 speeds so that stuff was usable.

Damn. Lol I kept that turbo button down all the time, thinking turbo = faster. TBF to myself it’s a reasonable mistake! Mind you, I think a lot of what slowed that machine was the hard drive. Faster than loading stuff from a cassette tape but only barely. You could switch the computer on and go make a sandwich while windows 3.1 loads.

Oh, yeah, a lot of people made that mistake. It was badly named.

TIL, way too late! Cheers mate

Actually you used it correctly. The slowdown to 8086 speeds was applied when the button was unpressed.

When the button was pressed the CPU operated at its normal speed.

On some computers it was possible to wire the button to act in reverse (many people did not like having the button be “on” all the time, as they did not use any 8086 apps), but that was unusual. I believe that was the case with OP’s computer.

It varied by manufacturer.

Some turbo = fast others turbo = slow.

That’s… the same thing.

Whoops, I thought you were responding to the first child comment.

I was thinking pressing it turns everything to shit, but that works too. I’d also accept, completely misunderstood by future generations.

Well now I wanna hear more about the history of this mystical shit button

Back in those early days many applications didn’t have proper timing; they basically just ran as fast as they could. That was fine on an 8MHz CPU, as you probably just wanted stuff to run as fast as it could (we weren’t listening to music or watching videos back then). When CPUs got faster (or it could be that they started running at a multiple of the base clock speed), stuff was suddenly happening TOO fast. The turbo button was a way to slow down the clock speed by some amount to make legacy applications run how they were supposed to run.

Most turbo buttons never worked for that purpose, though; they were still way too fast. Like, even ignoring other advances such as better IPC (or rather CPI back in those days), you don’t get to an 8MHz 8086 by halving the clock speed of a 50MHz 486. You get to 25MHz. And practically all games past that 8086 stuff were written with proper timing code because devs knew perfectly well that they were writing for more than one CPU. Also, there’s software to do the same job but more precisely and flexibly.

It probably worked fine for the original PC-AT or something when running PC-XT programs (how would I know, our first family box was a 386), but after that it was pointless. Then it hung on for years, then it vanished.
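To put rough numbers on the timing point above, here is a minimal Python sketch. It assumes a hypothetical game that needs a fixed number of CPU cycles per logic tick and was tuned for an 8MHz machine. `CYCLES_PER_TICK` and `TARGET_TPS` are made-up illustration values, and the model ignores the fact that newer CPUs also do more work per clock.

```python
# Hypothetical figures for illustration only; not measured from real hardware.
CYCLES_PER_TICK = 800_000   # assumed CPU cycles needed for one game-logic tick
TARGET_TPS = 10.0           # ticks per second the game was tuned for at 8 MHz


def untimed_tps(clock_hz: float) -> float:
    """Loop runs flat out, so game speed scales directly with clock speed."""
    return clock_hz / CYCLES_PER_TICK


def timed_tps(clock_hz: float, target_tps: float = TARGET_TPS) -> float:
    """Loop waits on a timer, so speed is capped at target_tps on a fast enough CPU."""
    return min(target_tps, untimed_tps(clock_hz))


if __name__ == "__main__":
    machines = [
        ("8 MHz 8086", 8e6),
        ("50 MHz 486", 50e6),
        ("50 MHz 486 with clock halved", 25e6),
    ]
    for label, hz in machines:
        print(f"{label}: untimed {untimed_tps(hz):.2f} tps, timed {timed_tps(hz):.2f} tps")
```

Under these assumptions the halved 486 still runs the untimed loop at roughly three times the intended speed, while the timed loop holds the same pace on every clock.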

Also bubbles don’t “leak”.

I mean, sometimes they kinda do? They either pop or slowly deflate, I’d say slow deflation could be argued to be caused by a leak.

Are we talking about bubbles or are we talking about balloons? Maybe we should change to using the word balloon instead, since these economic ‘bubbles’ can also deflate slowly.

Good point, not sure that economists are human enough to take sense into account, but I think we should try and make it a thing.

You can do it easily with a balloon (add some tape then poke a hole). An economic bubble can work that way as well, basically demand slowly evaporates and the relevant companies steadily drop in value as they pivot to something else. I expect the housing bubble to work this way because new construction will eventually catch up, but building new buildings takes time.

The question is, how much money (tape) are the big tech companies willing to throw at it? There’s a lot of ways AI could be modified into niche markets even if mass adoption doesn’t materialize.

You do realize an economic bubble is a metaphor, right? My point is that a bubble can either deflate rapidly (severe market correction, or a “burst”), or it can deflate slowly (a bear market in a certain sector). I’m guessing the industry will do what it can to have AI be the latter instead of the former.

One good example of a bubble that usually deflates slowly is the housing market. The housing market goes through cycles, and those bubbles very rarely pop. It popped in 2008 because banks were simultaneously caught with their hands in the candy jar by lying about risk levels of loans, so when foreclosures started, it caused a domino effect. In most cases, the fed just raises rates and housing prices naturally fall as demand falls, but in 2008, part of the problem was that banks kept selling bad loans despite high mortgage rates and high housing prices, all because they knew they could sell those loans off to another bank and make some quick profit (like a game of hot potato).

In the case of AI, I don’t think it’ll be the fed raising rates to cool the market (that market isn’t impacted as much by rates), but the industry investing more to try to revive it. So Nvidia is unlikely to totally crash because it’ll be propped up by Microsoft, Amazon, and Google, and Microsoft, Apple, and Google will keep pitching different use cases to slow the losses as businesses pull away from AI. That’s quite similar to how the fed cuts rates to spur economic investment (i.e. borrowing) to soften the impact of a bubble bursting, just driven from mega tech companies instead of a government.

At least that’s my take.

The broader market did the same thing

https://finance.yahoo.com/quote/SPY/

$560 to $510 to $560 to $540

So why did $NVDA have larger swings? It has to do with the concept called beta. High beta stocks go up faster when the market is up and go down further when the market is down. Basically high variance risky investments.
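For anyone who wants to see beta as a number: it is usually computed as the covariance of the stock's returns with the market's returns, divided by the variance of the market's returns. A minimal sketch, using the SPY levels quoted above for the market and a hypothetical stock that simply moves twice as much as the market each period (that stock series is invented, it is not NVDA data):

```python
def returns(prices):
    """Simple period-over-period returns from a list of prices."""
    return [(b - a) / a for a, b in zip(prices, prices[1:])]


def beta(stock_returns, market_returns):
    """Beta = covariance(stock, market) / variance(market)."""
    n = len(market_returns)
    mean_s = sum(stock_returns) / n
    mean_m = sum(market_returns) / n
    cov = sum((s - mean_s) * (m - mean_m)
              for s, m in zip(stock_returns, market_returns))
    var = sum((m - mean_m) ** 2 for m in market_returns)
    return cov / var


# SPY levels from the comment above; the stock series is hypothetical.
market = returns([560, 510, 560, 540])
stock = [2 * r for r in market]

if __name__ == "__main__":
    print(f"beta = {beta(stock, market):.2f}")
```

A beta near 1 means the stock moves about as much as the market; the made-up stock here comes out at 2, so its swings are twice as large in both directions.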

Why did the market have these swings? Because of uncertainty about future interest rates. Interest rates not only matter vis-a-vis business loans but also affect the risk-free rate for investors.

When investors invest in the stock market, they want to get back the risk-free rate (how much they get from treasuries) + the risk premium (how much stocks outperform bonds long term).

If the risks of the stock market are the same, but the payoff of the treasuries goes up, then you need a higher return from stocks. To get a higher return you can only accept a lower price.

This is why stocks are down, NVDA is still making plenty of money in AI
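A toy version of that reasoning, assuming a stock is priced like a perpetuity (price = expected yearly cash flow / required return). The pricing model, the $10 cash flow, and the rate figures are illustrative assumptions on my part, not anything stated in the comment above.

```python
def required_return(risk_free: float, risk_premium: float) -> float:
    """Return investors demand: treasury yield plus a premium for stock risk."""
    return risk_free + risk_premium


def perpetuity_price(cash_flow: float, required: float) -> float:
    """Price of a constant yearly cash flow discounted at the required return."""
    return cash_flow / required


CASH_FLOW = 10.0      # hypothetical yearly cash flow per share
RISK_PREMIUM = 0.05   # hypothetical, held constant in both scenarios

price_low_rates = perpetuity_price(CASH_FLOW, required_return(0.02, RISK_PREMIUM))
price_high_rates = perpetuity_price(CASH_FLOW, required_return(0.05, RISK_PREMIUM))

if __name__ == "__main__":
    print(f"risk-free 2%: price {price_low_rates:.2f}")
    print(f"risk-free 5%: price {price_high_rates:.2f}")
```

Same cash flow and same risk in both cases; only the treasury yield changed, and the price investors are willing to pay drops with it.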

There’s more to it as well, such as:

- investors coming back from vacation and selling off losses and whatnot

- investors expecting reduced spending between summer and holidays; we’re past the “back to school” retail bump and into a slower retail economy

- upcoming election, with polls shifting between Trump and Harris

September is pretty consistently more volatile than other months, and has net negative returns long-term. So it’s not just the Fed discussing rate cuts (that news was reported over the last couple months, so it should be factored in), but just normal sideways trading in September.

We already knew about back to school sales, they happen every year and they are priced in. If there was a real stock market dump every year in September, everyone would short September, making a drop in August and covering in September, making September a positive month again

It’s not every year, but it is more than half the time. Source:

History suggests September is the worst month of the year in terms of stock-market performance. The S&P 500 SPX has generated an average monthly decline of 1.2% and finished higher only 44.3% of the time dating back to 1928, according to Dow Jones Market Data.

S&P 500 up this September officially

Woo! We’re part of the 44% or so. :)

45% now since the data only goes back 100 years
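Rough arithmetic behind that update, assuming the quoted 44.3% covers one September per year from 1928 through 2023 (96 of them) and that this year adds one more up month. The sample size is my assumption; the quoted source does not state it.

```python
def updated_win_rate(old_rate: float, old_count: int,
                     new_wins: int = 1, new_months: int = 1) -> float:
    """Fold new observations into an existing win rate."""
    old_wins = old_rate * old_count
    return (old_wins + new_wins) / (old_count + new_months)


septembers = 2023 - 1928 + 1          # assumed sample size behind the 44.3% figure
new_rate = updated_win_rate(0.443, septembers)

if __name__ == "__main__":
    print(f"{septembers} Septembers -> updated win rate {new_rate:.1%}")
```

Under that assumption the updated figure lands just under 45%, so one good September barely moves a record that long.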

It’s all vibes and manipulation

I’ve noticed people have been talking less and less about AI lately, particularly online and in the media, and absolutely nobody has been talking about it in real life.

The novelty has well and truly worn off, and most people are sick of hearing about it.

The hype is still percolating, at least among the people I work with and at the companies of people I know. Microsoft pushing Copilot everywhere makes it inescapable to some extent in many environments, there’s people out there who have somehow only vaguely heard of ChatGPT and are now encountering LLMs for the first time at work and starting the hype cycle fresh.

It’s like 3D TVs, for a lot of consumer applications tbh

Oh fuck that’s right, that was a thing.

Goddamn

3D has been a thing every 15 years or so

3D TVs were a commercial fad once and I haven’t seen them in forever.

2016 may have been the end of them

Yes but 3D is always a thing periodically.

I used shutter glasses with two voodoo2 cards…

I used shutter glasses with the sega master system back in 87. They were rad af

Yes, exactly.

Yeah, now we are gonna get the reality of deep fakes; fun times.

Welp, it was ‘fun’ while it lasted. Time for everyone to adjust their expectations to much more humble levels than what was promised and move on to the next scheme. After Metaverse, NFTs and ‘Don’t become a programmer, AI will steal your job literally next week!11’, I’m eager to see what they come up with next. And with eager I mean I’m tired. I’m really tired and hope the economy just takes a damn break from breaking things.

I just hope I can buy a graphics card without having to sell organs some time in the next two years.

Don’t count on it. It turns out that the sort of stuff that graphics cards do is good for lots of things, it was crypto, then AI and I’m sure whatever the next fad is will require a GPU to run huge calculations.

I’m sure whatever the next fad is will require a GPU to run huge calculations.

I also bet it will, cf my earlier comment on rendering farm and looking for what “recycles” old GPUs https://lemmy.world/comment/12221218 namely that it makes sense to prepare for it now and look for what comes next BASED on the current most popular architecture. It might not be the most efficient but probably will be the most economical.

AI is shit but imo we have been making amazing progress in computing power, just that we can’t really innovate atm, just more race to the bottom.

——

I thought capitalism bred innovation, did tech bros lied?

/s

I’d love an upgrade for my 2080 TI, really wish Nvidia didn’t piss off EVGA into leaving the GPU business…

If there is even a GPU being sold. It’s much more profitable for Nvidia to just make compute-focused chips than to upgrade their gaming lineup. GeForce will just get the compute chip rejects, and laptop GPUs for the lower-end parts. After the AI bubble bursts, maybe they’ll get back to their gaming roots.

My RX 580 has been working just fine since I bought it used. I’ve not been able to justify buying a new (used) one. If you have one that works, why not just stick with it until the market gets flooded with used ones?

But if it doesn’t disrupt it isn’t worth it!

/s

move on to the next […] eager to see what they come up with next.

That’s a point I’m making in a lot of conversations lately : IMHO the bubble didn’t pop BECAUSE capital doesn’t know where to go next. Despite reports from big banks that there is a LOT of investment for not a lot of actual returns, people are still waiting on where to put that money next. Until there is such a place, they believe it’s still more beneficial to keep the bet on-going.

AI doesn’t need to steal all programmer jobs next week, but I have much doubt there will still be many available in 2044 when even just LLMs still have so many things that they can improve on in the next 20 years.

I find it insane when “tech bros” and AI researchers at major tech companies try to justify the wasting of resources (like water and electricity) in order to achieve “AGI” or whatever the fuck that means in their wildest fantasies.

These companies have no accountability for the shit that they do and consistently ignore all the consequences their actions will cause for years down the road.

It’s research. Most of it never pans out, so a lot of it is “wasteful”. But if we didn’t experiment, we wouldn’t find the things that do work.

Most of the entire AI economy isn’t even research. It’s just grift. Slapping a label on ChatGPT and saying you’re an AI company. It’s hustlers trying to make a quick buck from easy venture capital money.

You can probably say the same about all fields, even those that have formal protections and regulations. That doesn’t mean that there aren’t people that have PhD’s in the field and are trying to improve it for the better.

Sure but typically that’s a small part of the field. With AI it’s a majority, that’s the difference.

No, it is the majority in every field.

Specialists are always in the minority, that is like part of their definition.

The majority of every field is fraudsters? Seriously?

Is it really a grift when you are selling possible value to an investor who would make money from possible value?

As in, there is no lie; investors know it’s a gamble and are just looking for the gamble that everyone else bets on, not that it would provide real value.

I would classify speculation as a form of grift. Someone gets left holding the bag.

I agree, but these researchers/scientists should be more mindful about the resources they use up in order to generate the computational power necessary to carry out their experiments. AI is good when it gets utilized to achieve a specific task, but funneling a lot of money and research towards general purpose AI just seems wasteful.

I mean, general purpose AI doesn’t cap out at human intelligence, which you could utilize to come up with ideas for better resource management.

Could also be a huge waste but the potential is there… potentially.

I don’t think I’ve heard a lot of actual research in the AI area not connected to machine learning (which may be just one component, not really necessary at that).

What’s funny is that we already have general intelligence in billions of brains. What tech bros want is a general intelligence slave.

Well put.

I’m sure plenty of people would be happy to be a personal assistant for searching, summarizing, and compiling information, as long as they were adequately paid for it.

Too much optimism and hype may lead to the premature use of technologies that are not ready for prime time.

— Daron Acemoglu, MIT

Preach!

Personally I can’t wait for a few good bankruptcies so I can pick up a couple of high end data centre GPUs for cents on the dollar

Search for the Nvidia P40 24GB on eBay: about $200 each and surprisingly good for a self-hosted LLM. If you plan to build an array of GPUs, search for the P100 16GB instead. It’s the same price, but unlike the P40 it supports NVLink, and its 16GB is HBM2 memory on a 4096-bit bus, so it’s still competitive in the LLM field. The P40’s 24GB is GDDR5, so its strong point is the amount of memory for the money, but it’s rather slow compared to the P100 and it doesn’t support NVLink.
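For a rough sense of why the bus width matters for LLM work: peak memory bandwidth is approximately bus width times per-pin data rate, divided by 8 to get bytes. In the sketch below the P100's 4096-bit figure comes from the comment above, while the P40's 384-bit bus and both per-pin data rates are approximate values I am assuming, so check a spec sheet before relying on them.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    bus_width_bits: width of the memory interface in bits
    data_rate_gbps: effective transfer rate per pin in Gbit/s
    """
    return bus_width_bits * data_rate_gbps / 8


# Per-pin rates and the P40 bus width are assumed, approximate figures.
p100_hbm2 = peak_bandwidth_gb_s(4096, 1.43)
p40_gddr5 = peak_bandwidth_gb_s(384, 7.2)

if __name__ == "__main__":
    print(f"P100 (HBM2):  ~{p100_hbm2:.0f} GB/s")
    print(f"P40 (GDDR5):  ~{p40_gddr5:.0f} GB/s")
```

With those assumed inputs the P100 has a bit more than twice the P40's peak bandwidth, which fits the "more memory but slower" trade-off described above.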

Thanks for the tips! I’m looking for something multi-purpose for LLM/stable diffusion messing about + transcoder for jellyfin - I’m guessing that there isn’t really a sweet spot for those 3. I don’t really have room or power budget for 2 cards, so I guess a P40 is probably the best bet?

Try the Ryzen 8700G’s integrated GPU for transcoding, since it supports AV1, and these P-series GPUs for LLM/Stable Diffusion; that would be a good mix, I think. Or, if you don’t have the budget for a new build, buy an Intel A380 GPU for transcoding. You can attach it like a mining GPU through a PCIe riser. Linus Tech Tips tested this GPU for transcoding, as I remember.

8700g

Hah, I’ve pretty recently picked up an Epyc 7452, so not really looking for a new platform right now.

The Arc cards are interesting, will keep those in mind

Intel a310 is the best $/perf transcoding card, but if P40 supports nvenc, it might work for both transcode and stable diffusion.

Lowest price on Ebay for me is 290 Euro :/ The p100 are 200 each though.

Do you happen to know if I could mix a 3700 with a p100?

And thanks for the tips!

Ryzen 3700? Or rtx 3070? Please elaborate

Oh sorry, nvidia RTX :) Thanks!

I looked it up, rtx 3070 have nvlink capabilities though i wonder if all of them have it, so you can pair it if it have nvlink capabilities

Can it run crysis?

How about cyberpunk?

I ran doom on a GPU!

Personally I don’t care much for the LLM stuff, I’m more curious how they perform in Blender.

Interesting, I did try a bit of remote rendering on Blender (just to learn how to use it via CLI), so that makes me wonder who is indeed scraping the bottom of the barrel of “old” hardware and what they are using it for. Maybe somebody is renting old GPUs for render farms, maybe other tasks. Any pointers to such a trend?

Digging into it a bit more, it seems like I might be better off getting a 12gb 3060 - similar price point, but much newer silicon

It depends. If you want to run LLMs, data center GPUs are better; if you want to run general-purpose tasks, newer silicon is better. In my case I prefer a build that offloads tasks. Since I’m daily-driving Linux, my dream build is an AMD RX 7600 XT 16GB as the main GPU, an Nvidia P40 for LLMs, and the Ryzen 8700G’s 780M iGPU for transcoding and light tasks. That way you have your usual gaming home PC that also serves as a server in the background while being used.

A lot of the AI boom is like the DotCom boom of the Web era. The bubble burst and a lot of companies lost money but the technology is still very much important and relevant to us all.

AI feels a lot like that, it’s here to stay, maybe not in the ways investors are touting, but for voice, image, video synthesis/processing it’s an amazing tool. It also has lots of applications in biotech, targeting systems, logistics etc.

So I can see the bubble bursting and a lot of money being lost, but that is the point when actually useful applications of the technology will start becoming mainstream.

The bubble burst and a lot of companies lost money but the technology is still very much important and relevant to us all.

The DotCom bubble was built around the idea of online retail outpacing traditional retail far faster than it did, in fact. But it was, at its essence, a system of digital book keeping. Book your orders, manage your inventory, and direct your shipping via a more advanced and interconnected set of digital tools.

The fundamentals of the business - production, shipping, warehousing, distribution, the mathematical process of accounting - didn’t change meaningfully from the days of the Sears-Roebuck Catalog. Online was simply a new means of marketing. It worked well, but not nearly as well as was predicted. What Amazon did to achieve hegemony was to run losses for ten years, while making up the balance as a government sponsored series of data centers (re: AWS) and capitalize on discount bulk shipping through the USPS before accruing enough physical capital to supplant even the big box retailers. The digital front-end was always a loss-leader. Nobody is actually turning a profit on Amazon Prime. It’s just a hook to get you into the greater Amazon ecosystem.

Pivot to AI, and you’ve got to ask… what are we actually improving on? It’s not a front-end. It’s not a data-service that anyone benefits from. It is hemorrhaging billions of dollars just at OpenAI alone (one reason why it was incorporated as a Non-Profit to begin with - THERE WAS NO PROFIT). Maybe you can leverage this clunky behemoth into… low-cost mass media production? But it’s also extremely low-rent production, in an industry where - once again - marketing and advertisement are what command the revenue you can generate on a finished product. Maybe you can use it to optimize some industrial process? But it seems that every AI needs a bunch of human babysitters to clean up all the shit it leaves. Maybe you can get those robo-taxis at long last? I wouldn’t hold my breath, but hey, maybe?!

Maybe you can argue that AI provides some kind of hook to drive retail traffic into a more traditional economic model. But I’m still waiting to see what that is. After that, I’m looking at AI in the same way I’m looking at Crypto or VR. Just a gimmick that’s scaring more people off than it drags in.

I don’t mean it’s like the dotcom bubble in terms of context, I mean in terms of feel. Dotcom had loads of investors scrambling to “get in on it” many not really understanding why or what it was worth but just wanted quick wins.

This has same feel, a bit like crypto as you say but I would say crypto is very niche in real world applications at the moment whereas AI does have real world usages.

They are not the ones we are being fed in the mainstream like it replacing coders or artists, it can help in those areas but it’s just them trying to keep the hype going. Realistically it can be used very well for some medical research and diagnosis scenarios, as it can correlate patterns very easily showing likelihood of genetic issues.

The game and media industry are very much trialling for voice and image synthesis for improving environmental design (texture synthesis) and providing dynamic voice synthesis based off actors’ likenesses. We have had people’s likenesses in movies for decades via cgi but it’s only really now we can do the same but for voices and this isn’t getting into logistics and/or financial where it is also seeing a lot of application.

It’s not going to do much for the end consumer outside of the guff you currently use siri or alexa for etc, but inside the industries AI is very useful.

crypto is very niche in real world applications at the moment whereas AI does have real world usages.

Crypto has a very real niche use for money laundering that it does exceptionally well.

AI does not appear to do anything significantly more effectively than a Google search circa 2018.

But neither can justify a multi billion dollar market cap on these terms.

The game and media industry are very much trialling for voice and image synthesis for improving environmental design (texture synthesis) and providing dynamic voice synthesis based off actors’ likenesses. We have had people’s likenesses in movies for decades via cgi but it’s only really now we can do the same but for voices and this isn’t getting into logistics and/or financial where it is also seeing a lot of application.

Voice actors simply don’t cost that much money. Procedural world building has existed for decades, but it’s generally recognized as lackluster beside bespoke design and development.

These tools let you build bad digital experiences quickly.

For logistics and finance, a lot of what you’re exploring is solved with the technology that underpins AI (modern graph theory). But LLMs don’t get you that. They’re an extraneous layer that takes enormous resources to compile and offers very little new value.

I disagree, there are loads of white papers detailing applications of AI in various industries, here’s an example, cba googling more links for you.

there are loads of white papers detailing applications of AI in various industries

And loads more of its ineffectual nature and wastefulness.

Are you talking specifically about LLMs or Neural Network style AI in general? Super computers have been doing this sort of stuff for decades without much problem, and tbh the main issue is on training; for LLMs, inference is pretty computationally cheap

Super computers have been doing this sort of stuff for decades without much problem

Idk if I’d point at a supercomputer system and suggest it was constructed “without much problem”. Cray has significantly lagged the computer market as a whole.

the main issue is the training for LLMs; inference is pretty computationally cheap

Again, I would not consider anything in the LLM marketplace particularly cheap. Seems like they’re losing money rapidly.

The funny thing about Amazon, is we are phasing it out of our home now. Because it has become an online 7Eleven. You don’t pay for shipping and it comes fast, but you are often paying 50-100% more for everything. If you use AliExpress, 300-400% more… just to get it a week or two faster. I would rather go to local retailers that are increasing Chinese goods for a 150% profit, than Amazon and pay 300%. It just means I have to leave the house for 30 minutes.

would rather go to local retailers that are increasing Chinese goods for a 150% profit, than Amazon and pay 300%

A lot of the local retailers are going out of business in my area. And those that exist are impossible to get into and out of, due to the fixation on car culture. The Galleria is just a traffic jam that spans multiple city blocks.

The thing that keeps me at Amazon, rather than Target, is purely the time cost of visiting a shop versus having things shipped.

I’m glad someone else is acknowledging that AI can be an amazing tool. Every time I see AI mentioned on lemmy, people say that it’s entirely useless and they don’t understand why it exists or why anyone talks about it at all. I mention I use ChatGPT daily for my programming job, it’s helpful like having an intern do work for me, etc, and I just get people disagreeing with me all day long lol

Google Search is such an important facet for Alphabet that they must invest as many billions as they can to lead the new generative-AI search. IMO for Google it’s more than just a growth opportunity, it’s a necessity.

I guess I don’t really see why generative AI is a necessity for a search engine? It doesn’t really help me find information any faster than a Wikipedia summary, and is less reliable.

So far…

Obviously still has fair share of dumb stuff happening with these systems today, but there have been some big steps in just the last few years. Wouldn’t be surprised if it was much spookier a decade from now.

In general, good to use as a tool, to be taken with a grain of salt and further review.

Pop pop!

Magnitude!

Argument!

FOMO is the best explanation of this psychosis, and then of course denial by people who became heavily invested in it. Stuff like LLMs or ConvNets (and the likes) can already be used to do some pretty amazing stuff that we could not do a decade ago, there is really no need to shit rainbows and puke glitter all over it. I am also not against exploring and pushing the boundaries, but when you explore a boundary while pretending like you have already crossed it, that is how you get bubbles. And this again all boils down to appeasing some cancerous billionaire shareholders so they funnel down some money to your pockets.

there is really no need to shit rainbows and puke glitter all over it

I’m now picturing the unicorn from the Squatty Potty commercial, with violent diarrhea and vomiting.

Stuff like LLMs or ConvNets (and the likes) can already be used to do some pretty amazing stuff that we could not do a decade ago, there is really no need to shit rainbows and puke glitter all over it.

I’m shitting rainbows and puking glitter on a daily basis BUT it’s not against AI as a field, it’s not against AI research, rather it’s against:

- catastrophism and fear, even eschatology, used as a marketing tactic

- open systems and research that become closed

- trying to lock a market with legislation

- people who use a model, especially a model they don’t even have, e.g. using a proprietary API, and claim they are an AI startup

- C-level decisions that anything now must include AI

- claims that this or that skill is soon to be replaced by AI with actually no proof of it

- meaningless test results with grand claims like “passing the bar exam” used as marketing tactics

- claims that it scales, it “just needs more data”, not for .1% improvement but for radical change, e.g. emergent learning

- for-profit (different from public research) scraping of datasets without paying back anything to actual creators

- ignoring or lying about non renewable resource consumption for both training and inference

- relying on “free” or loss leader strategies to dominate a market

- claiming to be doing the work for the good of humanity, then signing an exclusive partnership with a corporation already fined for monopoly practices

I’m sure I’m forgetting a few but basically none of those criticisms are technical. None of those criticisms are about the current progress made. Rather, they are about business practices.

But the trillion dollar valued Nvidia…

I think they’re going to be bankrupt within 5 years. They have way too much invested in this bubble.

Fall in share price, yes.

Bankrupt, no. Their debt-to-equity ratio is 0.1455. They can pay off their $11.23 B debt with 2 months of revenue. They can certainly afford the interest payments.
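
For anyone who wants to sanity-check that, here is a rough sketch of what those two numbers imply. It only uses the figures quoted in the comment (0.1455 and $11.23 B); the function names are made up for illustration, and it treats revenue as if all of it could go to debt, which ignores costs, so read it as back-of-the-envelope only.

```python
# Figures as quoted in the comment above; not taken from any filing.
DEBT_B = 11.23            # total debt, in billions of USD
DEBT_TO_EQUITY = 0.1455   # debt divided by shareholders' equity


def implied_equity(debt: float, debt_to_equity: float) -> float:
    """Equity implied by a debt figure and a debt-to-equity ratio."""
    return debt / debt_to_equity


def revenue_needed_per_month(debt: float, months: float) -> float:
    """Monthly revenue at which `months` of revenue equals the debt."""
    return debt / months


equity_b = implied_equity(DEBT_B, DEBT_TO_EQUITY)
monthly_b = revenue_needed_per_month(DEBT_B, 2)

print(f"Implied equity: ${equity_b:.2f} B")
print(f"Revenue per month for a 2-month payoff: ${monthly_b:.3f} B")
```

So the claim amounts to equity of several times the debt, and monthly revenue of at least half the debt figure. Whether the real revenue clears that bar is something to check against their actual reports.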

I highly doubt that. If the AI bubble pops, they’ll probably be worth a lot less relative to other tech companies, but hardly bankrupt. They still have a very strong GPU business, they probably have an agreement with Nintendo on the next Switch (like they did with the OG Switch), and they could probably repurpose the AI tech in a lot of different ways, not to mention various other projects where they package GPUs into SOCs.

It really depends on how much they’ve invested in building AI chips.

Sure, but their deliveries have also been incredibly large. I’d be surprised if they haven’t already made enough from previous sales to cover all existing and near-term investments into AI. The scale of the build-out by big cloud firms like Amazon, Google, and Microsoft has been absolutely incredible, and Nvidia’s only constraint has been making enough of them to sell. So even if support completely evaporates, I think they’ll be completely fine.

They don’t build the chips at all. They pay TSMC.

NVIDIA’s uses of AI technology aren’t going to pop, things like DLSS are here to stay. The value of the company and their sales are inflated by the bubble, but the core technology of NVIDIA is applicable way beyond the chat bot hype.

Bubbles don’t mean there’s no underlying value. The dot com bubble didn’t take down the internet.

Maybe we can have normal priced graphics cards again.

I’m tired of people pretending £600 is a reasonable price to pay for a mid range GPU.

I’m not sure, these companies are building data centers with so many GPUs that they have to be spread out geographically with respect to the power grid, because if it were all done in one place it would take the grid down.

And they are just building more.

But the company doesn’t have the money. Stock value means investor valuation, not company funds.

Once a company goes public for the very first time, it’s getting money into its account, but from then on forward, that’s just investors speculating and hoping on a nice return when they sell again.

Of course there should be some correlation between the company’s profitability and the stock price, so ideally they do have quite some money, but in an investment craze like this, the correlation is far from 1:1. So whether they can still afford to build the data centers remains to be seen.

Yeah, someone else commented with their financials and they look really good, so while I certainly agree that they are overvalued because we are in an AI training bubble, I don’t see it popping for a few years, especially given that they are selling the shovels. Every big player in the space is set on orders of magnitude of additional compute for the next 2 years or more. It doesn’t matter if the company they sold GPUs to fails if they already sold them. Something big and unexpected would have to happen to upset that trajectory right now, and I don’t see it, because companies are in the exploratory stage of AI tech so no one knows what doesn’t work until they get the compute they need. I could be wrong, but that’s what I see as a watcher of AI news channels on YouTube.

The co-founder of OpenAI just got a billion dollars for his new 3 month old AI startup. They are going to spend that money on talent and compute. X just announced a data center with 100,000 GPUs for Grok 2 and plans to build the largest in the world I think? But that’s Elon, so grains of salt and all that are required there. Nvidia are working with robotics companies to make AI that can train robots virtually to do a task, so that in the real world a robot will succeed first try. No more Boston Dynamics abuse compilation videos. Right now agentic AI workflow is supposed to be the next step, so there will be overseer AI algorithms to develop and train.

All that is to say there is a ton of work that requires compute for the next few years.

{Opinion here} – I feel like a lot of people are seeing grifters and a wobbly GPT-4o launch and calling the game too soon. It takes time to deliver the next product when it’s a new invention in its infancy and the training parameters are scaling nearly exponentially from gen to gen.

I’m sure the structuring of payment for the compute devices isn’t as simple as my purchase of a gaming GPU from Micro Center, but Nvidia are still financially sound. I could see a lot of companies suffering from this long term but Nvidia will be The player in AI compute, whatever that looks like, so they are going to bounce back and be fine.

Couldn’t agree more. There is quite a bit of AI vaporware but NVIDIA is the real stuff and will weather whatever storm comes with ease.

They’re not building them for themselves, they’re selling GPU time and SuperPods. Their valuation is because there’s STILL a lineup a mile long for their flagship GPUs. I get that people think AI is a fad, and its public form may be, but there’s thousands of GPU powered projects going on behind closed doors that are going to consume whatever GPUs get made for a long time.

Their valuation is because there’s STILL a lineup a mile long for their flagship GPUs.

Genuinely curious, how do you know where the valuation, any valuation, comes from?

This is an interesting story, and it might be factually true, but as far as I know, unless someone has actually asked the biggest investors WHY they bet on a stock, nobody knows why a valuation is what it is. We might have guesses, and they might even be correct, but they also change.

I mentioned it a few times here before, but my bet is yes, what you mentioned, BUT also because the same investors do not know where else to put their money yet and thus simply can’t jump ship. They are stuck there, and it might again be because they initially thought the demand was high and nobody else could fulfill it, but I believe that’s not correct anymore.

but I believe that’s not correct anymore.

Why do you believe that? As far as I understand, other HW exists…but no SW to run on it…

Right, and I mentioned CUDA earlier as one of the reasons for their success, so it’s definitely something important. Clients might be interested in e.g. Google TPUs, startups like Etched, Tenstorrent, Groq, Cerebras Systems, or heck even designing their own, but are probably limited by their current stack relying on CUDA. I imagine though that if the backlog keeps on existing there will be abstraction libraries, at least for the most popular ones, e.g. TensorFlow, JAX or PyTorch, simply because the cost of waiting is too high.

Anyway what I meant isn’t about hardware or software but rather ROI, namely when Goldman Sachs and others issue analyst reports saying that the promise itself isn’t up to par with actual usage for paying customers.

Those reports might affect investments from the smaller players, but the big names (Google, Microsoft, Meta, etc.) are locked in a race to the finish line. So their investments will continue until one of them reaches the goal…[insert sunk cost fallacy here]…and I think we’re at least 1-2 years from there.

Edit: posted too soon

Well, I’m no stockologist, but I believe when your company has a perpetual sales backlog with a 15-year head start on your competition, that should lead to a pretty high valuation.

I’m also no stockologist and I agree, but that’s not my point. The stock should be high but that might already have been factored in, namely this is not a new situation, so theoretically that’s been priced in since investors have understood it. My point anyway isn’t about the price itself but rather the narrative (or reason, as in the example you mention on backlog and lack of competition) that investors themselves believe.

$2.5T currently to be exact

Nvidia is diversified in AI, though. Disregarding LLM, it’s likely that other AI methodologies will depend even more on their tech or similar.

Argh, after 25 years in tech I am surprised this keeps surprising you.

We’ve crested for sure. AI isn’t going to solve everything. AI stock will fall. Investor pressure to put AI into everything will subside.

Then we will start looking at AI as a cost benefit analysis. We will start applying it where it makes sense. Things will get optimised. Real profit and long term change will happen over 5-10 years. And afterwards, the utterly magical will seem mundane while everyone is chasing the next hype cycle.

Truth. I would say the actual time scales will be longer, but this is the harsh, soul-crushing reality that will make all the kids and mentally disturbed cultists on r/singularity scream in pain and throw stones at you. They’re literally planning for what they’re going to do once ASI changes the world to a star-trek, post-scarcity civilization… in five years. I wish I was kidding.

I’m far far more concerned about all the people who were deemed non essential so quickly after being “essential” for so long because AI will do so much work *slaps employees with 2 weeks severance*

I’m right there with you. One of my daughters loves drawing and designing clothes and I don’t know what to tell her in terms of the future. Will human designs be more valued? Less valued?

I’m trying to remain positive; when I went into software my parents barely understood that anyone could make a living off that “toy computer”.

But I agree; this one feels different. I’m hoping they all feel different to the older folks (me).